Hi! Hope work hasn’t overstimulated you too much today. Grab a cup of coffee and let us intrigue you with some fascinating facts:

Theodore Roosevelt was shot just before a campaign speech in 1912 and delivered the speech anyway. The bullet stayed in his chest for the rest of his life.

William Henry Harrison served the shortest presidency in U.S. history at just 31 days, dying shortly after he gave a two-hour inaugural speech in freezing rain without a coat.

Those were some pretty hardcore “food for thought” facts, which the occasion of Presidents Day definitely calls for. And now let’s get started with our data stories right away!

Today’s special:

AI’s Energy Footprint: Is the electricity consumption of data centers too much for the national grid?

Waging For Bare Minimum: The U.S. federal minimum wage has been stuck at $7.25 since 2009!

Retire In Peace: Are Americans even thinking about saving up for their retirement?

Guzzling Down The Grid

When everybody and their mother started reporting on the drinking habits of data centers, which gulp a gazillion liters of water for their cooling systems, they didn’t make a note of the eating disorder. According to Forbes, data centers eat up upwards of 4% of national electricity, enough to power New York and Chicago combined. With these outlandish energy demands, their projected footprint could reach anywhere from 9% to 12% of national electricity by 2030.

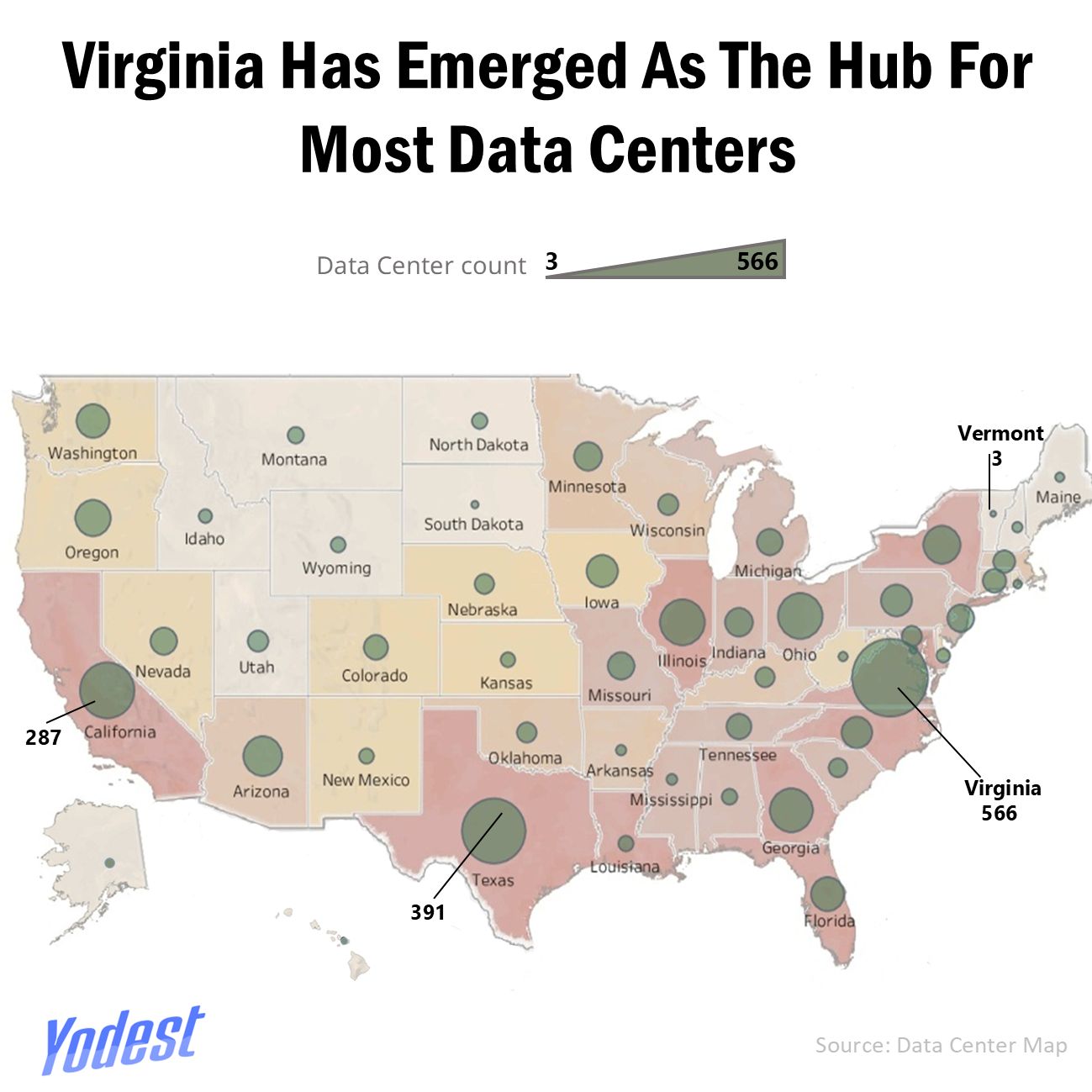

These numbers may seem trivial until you bring in the bigger picture: for the first time since 2007, U.S. power demand has risen for four consecutive years, driven by the rapid expansion of data centers. There are roughly 4,000 data centers nationwide and counting, with a big chunk clustered around Virginia, Texas and California.

Appetite For Catching Tails

Even with a strong year of energy production and natural gas output, there is still a hint of concern, since a large chunk of the increasing demand comes from computing facilities, including data centers, as per Tristan Abbey (Administrator of the U.S. Energy Information Administration). Add to that the fact that the current AI infrastructure isn’t anywhere close to smoothly meeting this rising demand.

As Tom Falcone, president of the Large Public Power Council, best puts it, “This (energy demand) came out of nowhere. Pre ChatGPT, we weren’t seeing this kind of load growth. It is an entire supply chain issue, involving utilities, industry, the workforce, and engineers, who don’t just fall out of trees because you want them.”

Collaterals Of Ambition

As per data from 2025, the United States consumed roughly 4,187 billion kWh of electricity, of which 1,508 billion kWh went to residential customers, 1,482 billion kWh to commercial customers, and 1,055 billion kWh to industrial customers. Three sources of generation, natural gas, coal, and nuclear, account for 75% of all electricity production. And since data centers carry the curse of Atlas, running 24/7, even the greener ones require backup power from these sources, adding extra strain to already high demand.
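For readers who like to check the math, here is a minimal Python sketch that turns the consumption figures quoted above into sector shares. The variable names are our own illustration, and the numbers are only the ones stated in the text; the three sectors do not add up to the quoted total, and the text does not say where the remainder goes.

```python
# Figures quoted in the text above, in billion kWh.
# Variable names are illustrative, not from any official dataset.
total = 4187
sectors = {"residential": 1508, "commercial": 1482, "industrial": 1055}

# Share of total consumption per sector, as a percentage.
shares = {name: round(100 * kwh / total, 1) for name, kwh in sectors.items()}

# Consumption that the three quoted sectors do not account for.
unattributed = total - sum(sectors.values())

for name, pct in shares.items():
    print(f"{name}: {pct}% of total")
print(f"not broken out in the text: {unattributed} billion kWh")
```

By this arithmetic, residential and commercial customers each take a bit over a third of the total, and industrial customers about a quarter.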

With record-high demand also come inflated prices for locals, with Bloomberg reporting that wholesale electricity in areas with heavy data center activity now costs an absurd 267% more for a single month than it did half a decade ago. All in all, with an ill-prepared system in place and large conglomerates rapidly pouring money into their tech ambitions, things have gone completely haywire for regular consumers as well as the climate, and the future looks blurry and grey for the overly ambitious AI companies.

Minimal Wage Maximum Labor

The U.S. federal minimum wage has been stuck at $7.25 per hour since 2009, which means nearly two decades of stagnant earning power while living costs soared. That figure is the legal floor under the Fair Labor Standards Act, so any worker living in a state that hasn’t acted independently is still bound to that number, regardless of inflation or productivity gains. Data from the U.S. Department of Labor confirms that many states have chosen to go above the federal baseline, increasingly stepping in where Congress has not. Some are modestly higher, like Arkansas at $11.00/hr or Alaska at $13.00/hr, while others have pivoted toward what was once considered an audacious goal.

This divergence has split America in two: one part where wages rise incrementally with economic conditions, and another where pay remains static while expenses do not. The result is a labor market where geography, rather than skill or effort, determines whether minimum-wage work can cover basic needs. Across much of the country, minimum wages now range from about $8.75 to nearly $17.95 in places like California, New York, and the District of Columbia, while parts of the South remain tethered to the $7.25 federal floor.

Waged Like A Wager

The state-by-state divide in minimum wage policy sharpened on January 1, 2026, with a wave of state-level minimum wage increases. This rising tide collectively boosted pay for over 8.3 million workers, as per the Economic Policy Institute (EPI). EPI’s analysis indicates that, for the first time, more workers now live in states with a minimum wage of $15 or higher than in states still anchored to the $7.25 federal floor, marking a meaningful structural shift in U.S. wage policy. In parallel, data from the Department of Labor shows that a growing number of states, like Delaware, Massachusetts and Illinois, are reaching or formally committing to a $15-per-hour minimum, a benchmark that once seemed politically unattainable.

Still, the math doesn’t fully add up. Even $15 an hour often falls short in states with high housing and healthcare costs. For many low-wage households, the shortfall is covered with credit cards. When paychecks don’t stretch far enough, essentials like food and bills are charged instead, pushing families deeper into paycheck-to-paycheck living and rising credit card debt. Research from the National Employment Law Project (NELP) highlights that wage gains, while real, frequently trail the pace of essential expenses, limiting how far these increases go in restoring affordability.

Can’t Retire The Jersey

One look at the Survey of Consumer Finances, and it’s not hard to see that the punch line isn’t how much Americans have saved; it’s how unequally their retirement savings are distributed. For adults under 35, the average retirement balance comes to $49,130, yet the median is merely $18,880. This wide gap suggests that many younger workers don’t have enough to spare, let alone save. The same pattern carries over to other age brackets, too.

Americans between the ages of 35 and 44 hold an average of $141,520 with a median of $45,000, while those aged 45-54 come in at $313,220 with $115,000 as the median. By the time Americans are 65-74, the average reaches $609,230, with the median at $200,000. For those 75 and above, the average drops to $462,410, while the median is only $130,000.
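To put a number on that gap, here is a small Python sketch that divides each quoted average by its median. It uses only the age brackets and figures stated above; the dictionary layout and names are our own illustration, not part of the survey.

```python
# (average, median) retirement balances in dollars, as quoted in the text.
# Only the brackets mentioned above are included; names are illustrative.
brackets = {
    "under 35": (49130, 18880),
    "35-44": (141520, 45000),
    "45-54": (313220, 115000),
    "65-74": (609230, 200000),
    "75+": (462410, 130000),
}

# How many times larger the average is than the median in each bracket.
ratios = {age: round(avg / med, 2) for age, (avg, med) in brackets.items()}

for age, ratio in ratios.items():
    print(f"{age}: average is {ratio}x the median")
```

In every bracket the average works out to more than 2.5 times the median, which is what you would expect when a small group of large balances pulls the average upward.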

This recurring divergence between average and median cuts through the noise. A small section of savers has pulled the averages far above where most Americans actually land. Fidelity’s data corroborates this: boomers, for example, average $249,300 in their 401(k) balances compared to a mere $13,500 for Gen Z. This large divide shows that the headline average conceals more than it reveals. The same pattern holds in IRAs, where boomers average $257,002 compared with just $25,109 for millennials. Early contributors have inflated the averages, while in reality many Americans save only sporadically or barely at all, leaving the typical saver leagues below the ideal number.

Cards Stashed Differently

The disparity doesn’t begin with big balances; it emerges from unequal room to save. Many young workers start with no financial runway at all. Under pressure from student loans, rent inflation and low wages, even small savings can seem far-fetched. As Vanessa N. Martinez, CEO of Expressive Wealth, puts it, “That’s one of the biggest struggles for some people, when they see a big number, that seems scary.”

According to Gallup, 59% of Americans have a retirement account to begin with, but participation varies sharply with income: 83% of households making $100,000 or more save, as opposed to only 28% of those earning under $50,000. Education runs parallel to this divide: 81% of college-educated adults save for retirement, versus only 39% of those without a degree.

Extra Data Bites

Guess The Bite

Which U.S. state drinks the most coffee per capita?

[Answer Below]

Feel free to share your feedback, or suggest any topics that you want us to cover!

Want some more data stories?